In the world of AI agents that click, scroll, execute, and automate, we’re moving fast from “just understand text” to “actually use software for you.” The new benchmark SCUBA tackles exactly that: how well can agents do real enterprise workflows inside the Salesforce platform?

What makes SCUBA stand out:

- It’s built around the actual workflows inside the Salesforce platform.

- It covers 300 task instances derived from real user interviews (platform admins, sales reps, and service agents).

- The tasks test not just “does the model answer the question” but “can the model use the UI, manipulate data, trigger workflows, troubleshoot issues.”

- It addresses a gap: existing benchmarks often focus on web navigation and software manipulation, but enterprise-software “computer use” is hard to measure. SCUBA aims to fill that.

Key Takeaway: If you want agents that don’t just chat, but act in enterprise software, this is a big step.

The Enterprise Impact

Imagine an AI assistant that can navigate your CRM, update records, launch workflows, interpret dashboard failures, and help your service team get unstuck. That’s the vision this paper leans into.

Here’s why it’s compelling:

- Enterprise alignment: Many benchmarks are academic or consumer-web oriented. SCUBA puts the spotlight on business-critical environments (admin, sales, and service).

- Realistic tasks: By deriving tasks from user interviews and real personas, it bridges the gap between “toy benchmark” and “live user scenario.”

- Measurable agent performance in context: It enables evaluation of how well an agent operates inside software systems, not just in terms of text.

- Roadmap for future AI assistants: As more organizations adopt AI to automate software use (not just analysis), benchmarks like this set expectations, highlight challenges, and direct progress.

For companies like Salesforce (and their customers), the implications are clear: better agent tooling, fewer manual clicks, faster issue resolution, and more efficient sales/service teams. For the AI community: a new frontier of “task execution in UI” rather than “just text reasoning.”

Key Insights:

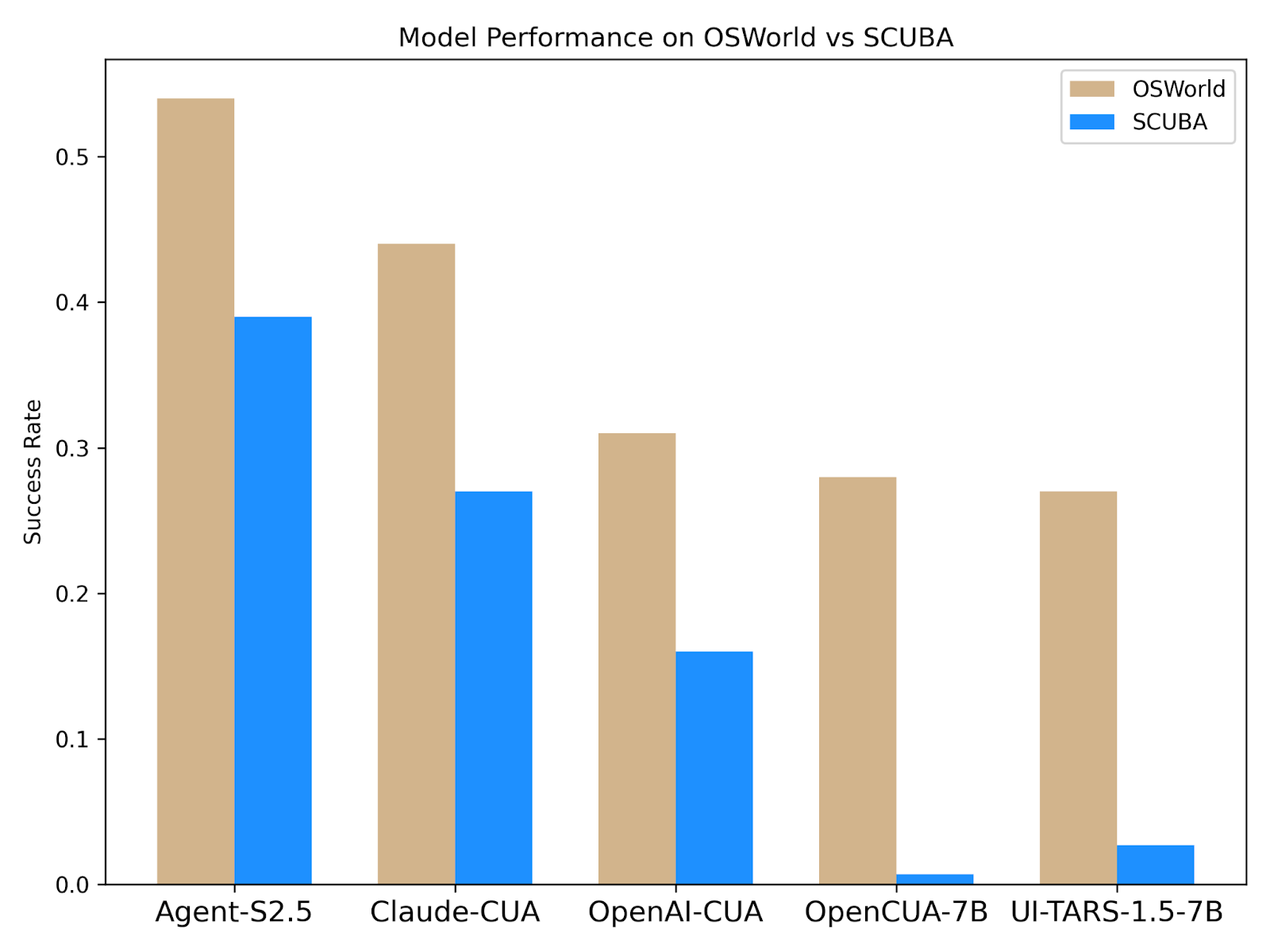

1. Real-world domain shift is hard

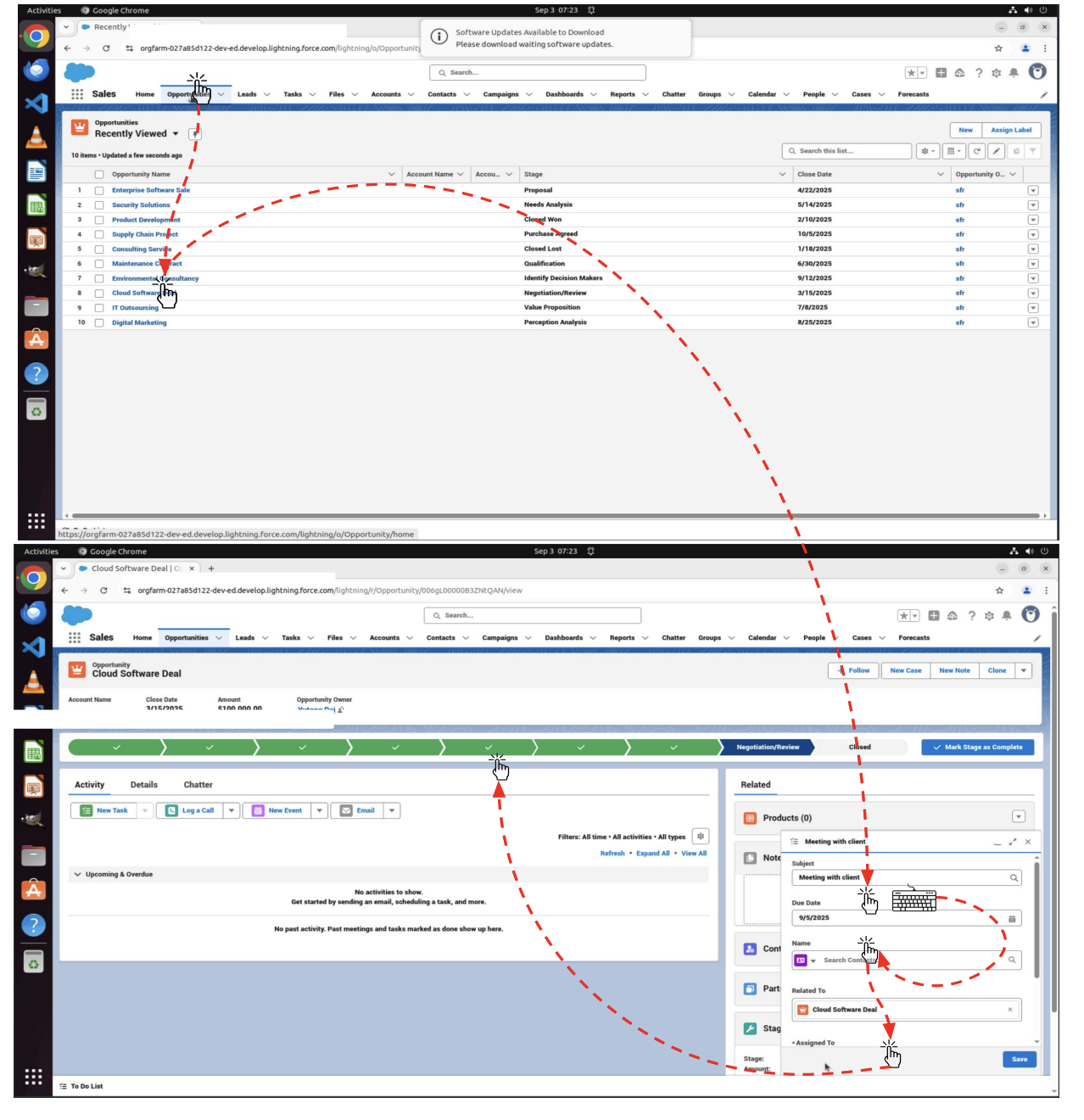

The performance drop when moving from the more generic OSWorld benchmark (which covers desktop applications) to SCUBA (CRM, enterprise workflows) is significant. The experiment results include a chart of the drop in success rates when switching benchmarks.

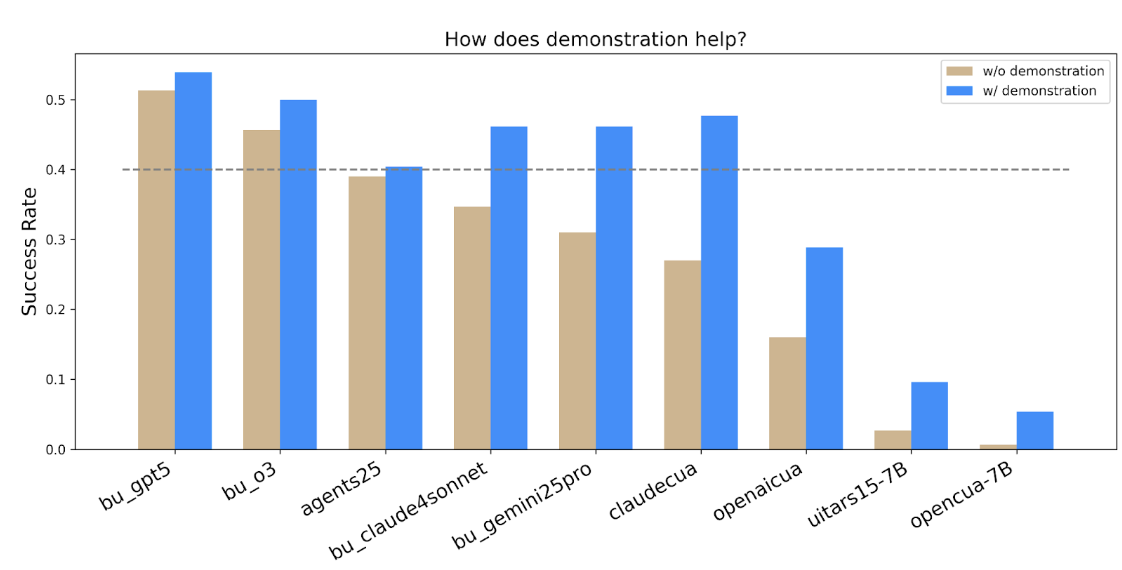

2. Demonstrations Help

Knowledge articles and tutorials on how to use Salesforce platforms are easily accessible. One natural question is whether AI agents can leverage this information as effectively as humans do. The experiment results show that:

- Human demonstrations (showing the agent how to do a similar task) improved performance across most agents: higher success rates, lower time, lower token usage (please see the technical report for more details). However, some agents didn’t benefit as much.

- Also, some ended up using more steps in demonstration-augmented mode (for example, due to finding “shortcuts” that the human demo didn’t show). So the design of demonstrations still matters.
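To make "demonstration-augmented mode" concrete, here is a minimal Python sketch of one way a demonstration could be placed in front of an agent's task instruction. It is only an illustration: the `Demonstration` class, the `build_prompt` function, and the prompt wording are hypothetical, and the actual format SCUBA uses is described in the technical report, not here.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Demonstration:
    """Hypothetical record of a human solving a similar task."""
    task: str
    steps: list[str]


def build_prompt(task: str, demo: Optional[Demonstration] = None) -> str:
    """Compose the agent instruction, optionally prefixed by a demonstration.

    Assumption: the demonstration is passed as plain numbered text.
    """
    parts = []
    if demo is not None:
        # Number the human's steps so the agent can follow them in order.
        numbered = "\n".join(
            f"{i}. {step}" for i, step in enumerate(demo.steps, start=1)
        )
        parts.append(f"Example of a similar task: {demo.task}\n{numbered}")
    parts.append(f"Your task: {task}")
    return "\n\n".join(parts)
```

Calling `build_prompt(task)` without a demonstration gives the baseline prompt, so the same function can serve both settings when comparing them.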

3. Cost, latency, and practical deployment matter

- Success rate is not the only metric; latency (time to complete tasks) and cost (API/token costs, number of steps) are also reported. For instance, browser-use agents had high success rates but higher latency (due to API service response time and multi-agent framework design).

- Demonstration augmentation not only improves success but can reduce time and costs (the paper reports ~13% lower time and ~16% lower cost in the demonstration-augmented setting).

- For enterprise adoption, this matters: an agent that succeeds but is too slow or too costly may be less useful in practice.
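As a rough illustration of how these metrics fit together, the Python sketch below aggregates per-task runs into success rate, mean time, mean cost, and mean steps, then computes the relative change between two settings. The `Run` fields and function names are assumptions made for this example, not the benchmark's actual logging schema.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Run:
    """One agent attempt at one task (hypothetical schema)."""
    success: bool
    seconds: float
    cost_usd: float
    steps: int


def summarize(runs: list[Run]) -> dict[str, float]:
    """Average the per-run metrics over a non-empty list of runs."""
    return {
        "success_rate": mean(1.0 if r.success else 0.0 for r in runs),
        "mean_seconds": mean(r.seconds for r in runs),
        "mean_cost_usd": mean(r.cost_usd for r in runs),
        "mean_steps": mean(r.steps for r in runs),
    }


def relative_change(baseline: float, treated: float) -> float:
    """Fractional change from baseline; negative means a reduction."""
    return (treated - baseline) / baseline
```

For example, `relative_change(base["mean_seconds"], demo["mean_seconds"])` returns a negative fraction when the demonstration-augmented setting is faster. A figure like the ~13% time reduction quoted above would correspond to a value near -0.13.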