Greetings from “the most powerful tech event in the world!”

I’m writing to you from Las Vegas, where I’m attending CES, formerly the Consumer Electronics Show. This is the massive annual trade show that showcases the next generation of technology.

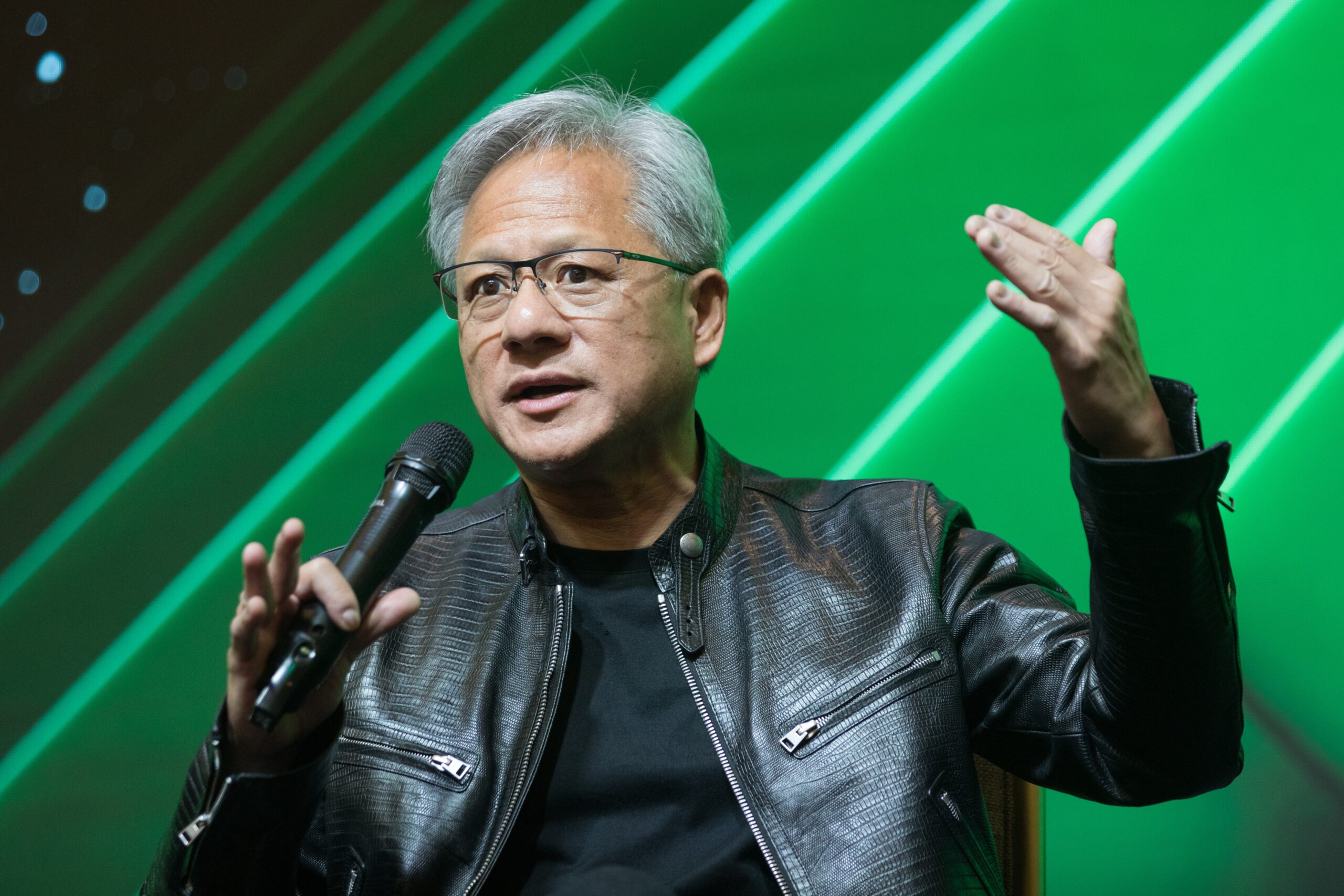

And I’ve already seen some wild things. Including this guy here…

Who I’ll save for a future issue.

But today, I want to cover Jensen Huang’s keynote, just like I did last year.

I wasn’t able to watch it live because I was attending the Boston Scientific and Hyundai keynote, although I’ll have a chance to see Huang speak at the Sphere later this week.

Still, I watched every minute of his CES keynote as soon as I got back to my hotel room.

And I don’t think it’s something we can afford to gloss over.

Because what he delivered was more than just a product showcase. It was Jensen Huang telling us where artificial intelligence is headed next…

And which companies are positioning themselves to control it.

From Cloud AI to Physical AI

For most of the last two years, artificial intelligence has been based almost entirely in the cloud.

We’ve measured progress by model size, training runs and how many tokens a system can generate per second.

That phase created massive value. It also made Nvidia one of the most important companies in the world.

But Jensen Huang made something clear at CES this week.

That phase is ending.

The next phase of AI isn’t about generating words or images. It’s about systems that can perceive the physical world, reason about it and take action on it. And Nvidia intends to supply the computing platform that makes this possible.

That’s why Huang spent so much time talking about physical AI in his keynote.

And it’s not just talk. During the keynote, he introduced Nvidia’s next major computing platform, Vera Rubin, which will enter production later this year.

Image: Nvidia

Vera Rubin is a full-system architecture that combines Nvidia’s custom CPU, next-generation GPUs, high-bandwidth memory, networking and data processing units into a single rack-scale machine.

In layman’s terms, it represents a shift from AI as software to AI as an operating system for physical machines.

According to Nvidia, a full Vera Rubin NVL72 system can deliver more than 3 exaFLOPS of inference performance. That’s more than double what the previous generation delivered.

More important than that raw number is what it enables. These systems are designed to run massive AI workloads continuously, with lower training costs and far higher throughput than before.

And that’s a big deal because physical AI is compute-hungry in a way that cloud-only AI isn’t.

Training a language model is expensive. But training a system to drive a car, operate a robot or control industrial equipment is far more demanding.

These systems must process sensor data in real time and simulate thousands of possible outcomes before acting. And they must do it reliably, not once, but every second of every day.

Nvidia is aligning its entire platform around making that possible.

Huang also unveiled Alpamayo, a new reasoning-focused AI stack designed for autonomous vehicles.

Image: Nvidia

The key problem for driverless vehicles is that seeing the world isn’t enough. Autonomous systems tend to fail in rare situations outside their training data.

Nvidia is trying to solve that by pairing perception with reasoning, so vehicles can think through a situation before acting.

Mercedes-Benz plans to ship vehicles using this system in early 2026.

Nvidia paired that announcement with demonstrations of its simulation software, which allows companies to generate massive amounts of synthetic training data. With it, robots, vehicles and industrial systems can be trained in virtual environments before they ever touch the real world.

Nvidia says these tools are already being used by robotics companies and manufacturers to accelerate development and reduce costs.

Taken together, Huang’s message from CES shows that, once again, he’s seemingly pivoting at exactly the right moment.

Nvidia is aiming to become the operating system for intelligent machines.

And the company can afford to make that bet because its current business is throwing off an extraordinary amount of cash.

In its most recent reported quarter, Nvidia generated roughly $57 billion in revenue, with data center sales dominating growth. Those numbers were driven by cloud providers racing to build AI infrastructure.

But cloud demand alone doesn’t justify the scale of investment Nvidia is making now.

Physical AI does.

Autonomous vehicles, industrial robots, logistics systems and intelligent factories represent a much larger and longer-lasting market than chatbots. These systems will require continuous upgrades, ongoing training and massive compute budgets.

And that changes the economics. It also helps explain Nvidia’s competitive position.

Because building a fast chip is hard. Building an integrated platform that spans hardware, networking, software, simulation and developer tools is even harder.

But once companies commit to that full stack, switching becomes costly.

That’s the payoff Nvidia is banking on.

Here’s My Take

Jensen Huang’s CES keynote wasn’t just about showing off new hardware.

It was about drawing a line between the AI era we’re living through now and the one that comes next.

The current one is all about models and cloud computing. But we’re quickly moving into a new phase that’s all about machines acting in the real world.

Nvidia is building the control system for that future, and the scale of that opportunity is larger than anything the company has pursued before.

Huang’s CES keynote made it clear that Nvidia isn’t waiting for this future to arrive.

Regards,

Ian King

Chief Strategist, Banyan Hill Publishing

Editor’s Note: We’d love to hear from you!

If you want to share your thoughts or suggestions about the Daily Disruptor, or if there are any specific topics you’d like us to cover, just send an email to dailydisruptor@banyanhill.com.

Don’t worry, we won’t reveal your full name in the event we publish a response. So feel free to comment away!