If you’ve spent any time building with AI, you’ve probably experienced this.

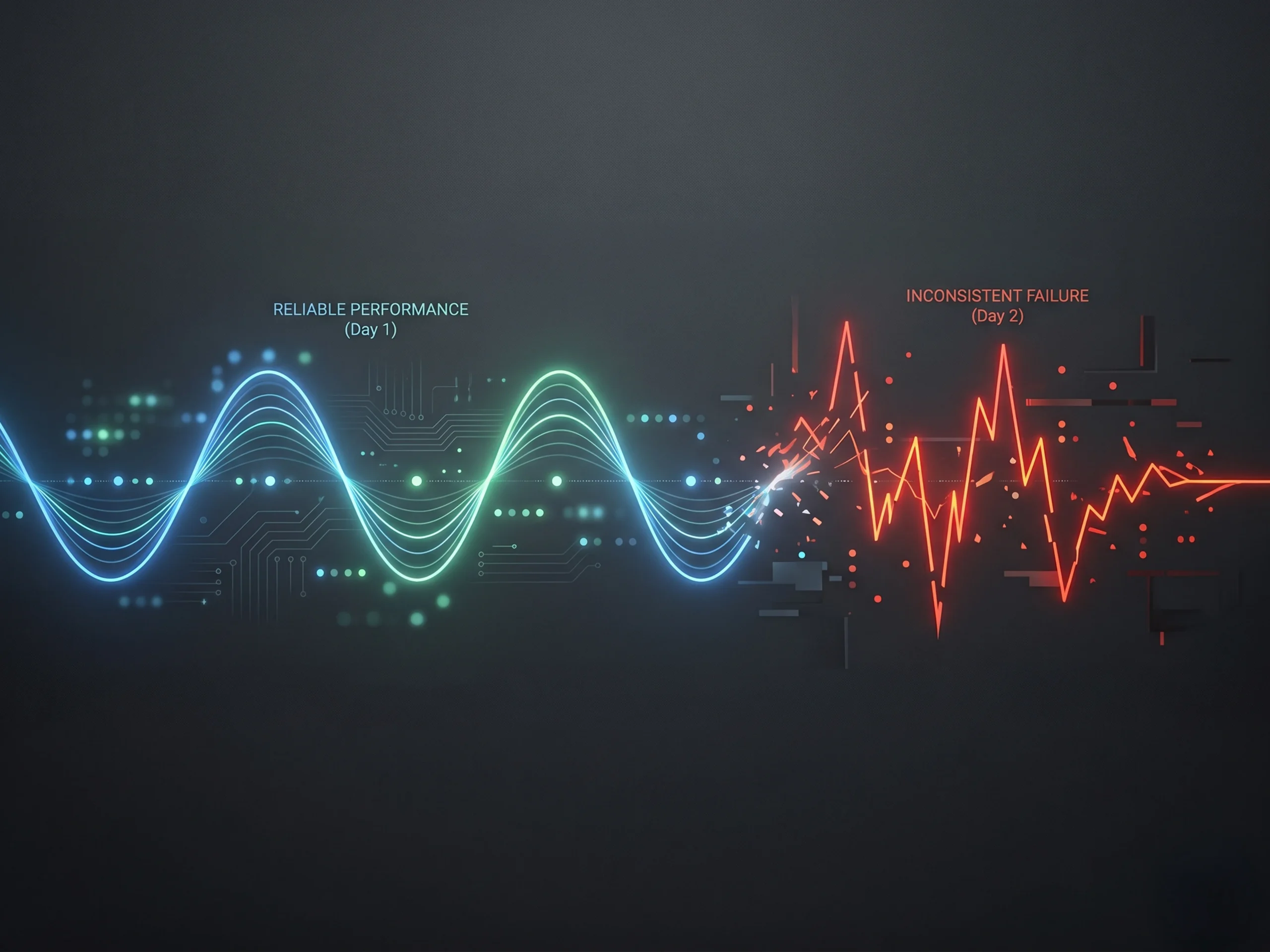

One day, the system feels incredible. It answers questions well, generates useful outputs, and starts to feel like something you could actually rely on. The next day, with a slightly different input, it misses the point entirely. It hallucinates. Or it gives you something so generic that it’s unusable.

Same model. Same tools. Completely different result.

That inconsistency is what frustrates teams the most. It’s also what prevents many growth-stage companies from moving AI from experimentation into real production workflows.

At a recent AIConf in Ahmedabad, Ravi Bhatia, Senior Software Engineering Manager at Loopio, framed the issue clearly. The problem is not the model. It’s how you are feeding it context.

The Hidden Variable Most Teams Ignore

When teams think about improving AI performance, they usually focus on the obvious levers like better models, better prompts, or more features. But as Ravi Bhatia emphasized in his talk, the real driver of performance is much simpler and far more often overlooked.

It’s what information is actually being passed into the system, and how it’s structured.

As he put it, output quality is directly tied to context. Garbage in, garbage out.

That has deep implications. Every response is shaped not just by the question being asked, but by everything surrounding it. Conversation history, retrieved data, tool outputs, memory, and system instructions all compete for attention within a limited window. When that system is not designed well, performance becomes unpredictable.
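One way to make that competition explicit is to give the window a token budget and admit content by priority. The sketch below is a minimal illustration, not a method from the talk: the `ContextItem` type, the priority scheme, and the four-characters-per-token estimate are all assumptions, and a real system would count tokens with the model’s own tokenizer.

```python
from dataclasses import dataclass


@dataclass
class ContextItem:
    source: str    # e.g. "system", "history", "retrieved" (illustrative labels)
    text: str
    priority: int  # lower number = more important (hypothetical scheme)


def estimate_tokens(text: str) -> int:
    # Rough assumption: about 4 characters per token.
    return max(1, len(text) // 4)


def build_context(items: list[ContextItem], budget: int) -> list[ContextItem]:
    """Keep the highest-priority items that fit inside the token budget."""
    kept: list[ContextItem] = []
    used = 0
    for item in sorted(items, key=lambda i: i.priority):
        cost = estimate_tokens(item.text)
        if used + cost <= budget:
            kept.append(item)
            used += cost
    return kept
```

With a small budget, a long conversation history is left out while the system instructions and a short retrieved snippet still fit. The point is that the decision is made deliberately rather than by whatever happened to be appended last.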

Why Performance Degrades as You Scale

Ravi Bhatia spent time outlining why systems that work early often break as they scale.

Most AI systems perform well initially because they’re simple. Limited inputs, narrow use cases, and clear prompts create clarity. But as companies expand their usage, complexity increases. More tools are connected, more data is pulled in, and more interactions are layered into the system.

At that point, teams usually fall into one of two traps.

Some overload the system. Every message, every tool response, and every piece of data gets appended to the context. Costs increase, latency climbs, and accuracy drops as the model struggles to focus.

Others provide too little context. The system lacks the information it needs, which leads to hallucinations, irrelevant answers, and wasted time. Bhatia called out both of these failure modes explicitly, noting that they cost teams not just money, but trust.

For growth-stage companies, this is often the moment when confidence in AI starts to erode.

More Data Is Not the Answer

One of the most important insights from Bhatia’s session is that more information does not lead to better outcomes.

In fact, as context grows, models become less effective at reasoning over it. Important details get buried, earlier information is forgotten, and outputs degrade. He described this as context rot, where the system technically has the right information but can’t reliably surface it.

The principle that follows is simple but powerful. Fewer tokens, higher signal.

This is where discipline shows up for growth-stage teams. It means selecting relevant tools instead of exposing every possible capability. It means referencing documents instead of loading entire files. It means deciding what belongs in short-term context versus long-term memory.

Bhatia used a helpful analogy that resonates with technical teams. Context is your RAM. You wouldn’t load your entire hard drive into memory, and the same principle applies here.
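The RAM analogy can be sketched as a short catalog of references that lives in context, with full documents fetched only when one is actually needed. Everything named here is hypothetical: `DocRef`, `DOCUMENT_STORE`, `render_catalog`, and `load_document` are illustrative stand-ins, and the in-memory dictionary plays the role of the "hard drive".

```python
from dataclasses import dataclass


@dataclass
class DocRef:
    doc_id: str
    title: str
    summary: str


# Hypothetical "hard drive": full documents stay out of the context window.
DOCUMENT_STORE = {
    "pricing-2024": "Pricing policy. " * 500,
    "security-faq": "Security FAQ. " * 500,
}

REFS = [
    DocRef("pricing-2024", "Pricing policy", "Plans, discounts, and renewal terms."),
    DocRef("security-faq", "Security FAQ", "Encryption, access control, and audits."),
]


def render_catalog(refs: list[DocRef]) -> str:
    """Render one short line per document for inclusion in the context."""
    return "\n".join(f"[{r.doc_id}] {r.title}: {r.summary}" for r in refs)


def load_document(doc_id: str, max_chars: int = 2000) -> str:
    """Fetch a document on demand, capped so one file cannot flood the window."""
    return DOCUMENT_STORE[doc_id][:max_chars]
```

The catalog costs a few dozen tokens per document, while the full text is only paid for when a request needs it.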

AI Is Now an Infrastructure Problem

Another key point Bhatia made is that context is not just a quality issue. It’s an infrastructure issue.

Every token has a cost, and as context windows grow, systems become more expensive and slower. He highlighted that as context increases, computational complexity scales in ways that directly impact latency and cost.
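A back-of-the-envelope calculation makes the scaling concrete. This is only a sketch under stated assumptions: the price per million tokens is a made-up parameter, not a real provider’s rate, and the quadratic term reflects standard self-attention, where work grows with the square of sequence length. Actual serving stacks apply optimizations that change the constants.

```python
def request_cost(input_tokens: int, price_per_million: float) -> float:
    """Input cost of one request; the price is a hypothetical parameter."""
    return input_tokens / 1_000_000 * price_per_million


def relative_attention_work(tokens: int, baseline_tokens: int) -> float:
    """How much more pairwise attention work a longer context implies,
    assuming standard quadratic self-attention."""
    return (tokens / baseline_tokens) ** 2
```

Under these assumptions, growing a context from 10,000 to 40,000 tokens quadruples the per-request input cost and implies roughly sixteen times the attention work, which is why trimming context tends to help both the bill and the latency.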

This is where techniques like prompt caching become important. If your system structure is consistent, you can reuse large portions of context at a fraction of the cost. If it’s not, you lose that efficiency entirely.
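Prompt caching is typically prefix-based, so a "consistent structure" in practice means keeping stable content at the front and volatile content at the end. The sketch below shows that layout and uses a hash to check that the prefix really is identical across requests. The prompt text, tool definition, and function names are all hypothetical, and the exact caching rules depend on your provider.

```python
import hashlib
import json

# Hypothetical stable content: identical on every request.
SYSTEM_PROMPT = "You are a support assistant for a hypothetical product."
TOOLS = [{"name": "search_docs", "description": "Search the knowledge base."}]


def build_prompt(user_message: str, history: list[str]) -> tuple[str, str]:
    """Return (stable_prefix, volatile_suffix) so cacheable content comes first."""
    # sort_keys keeps the serialized tool definitions byte-for-byte stable.
    stable_prefix = SYSTEM_PROMPT + "\n" + json.dumps(TOOLS, sort_keys=True)
    volatile_suffix = "\n".join(history + [user_message])
    return stable_prefix, volatile_suffix


def prefix_fingerprint(prefix: str) -> str:
    """Hash the prefix; a changed fingerprint means the cache would be missed."""
    return hashlib.sha256(prefix.encode("utf-8")).hexdigest()
```

Logging the fingerprint per request is a cheap way to notice when something such as a timestamp or a reordered tool list has crept into the prefix and silently broken reuse.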

For growth-stage startups, this matters more than it might seem. It affects margins, pricing models, and the ability to scale AI features sustainably.

Where the Best Teams Focus

Ravi Bhatia also made it clear where teams should focus if they want to improve performance quickly.

Retrieval.

Getting the right information at the right time has an outsized impact on system performance. Most teams underestimate how nuanced this is. Keyword search alone is not enough. Semantic understanding is required to match intent, and the best systems combine both approaches.
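One common way to combine keyword and semantic results is reciprocal rank fusion (RRF), which merges ranked lists without having to reconcile their scores. The talk did not specify a fusion method, so treat this as one illustrative option. The document IDs are invented, and `k = 60` is a commonly used default rather than a tuned value.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge several ranked lists of document IDs into one ranking.

    Each document earns 1 / (k + rank) from every list it appears in,
    so items ranked well by both retrievers rise to the top.
    """
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Sort by score descending, breaking ties by ID for deterministic output.
    return sorted(scores, key=lambda d: (-scores[d], d))


# Hypothetical results from a keyword retriever and a semantic retriever.
keyword_hits = ["a", "b", "c"]
semantic_hits = ["b", "d", "a"]
fused = reciprocal_rank_fusion([keyword_hits, semantic_hits])
```

Here the documents found by both retrievers, `b` and `a`, outrank `d` and `c`, which each appear in only one list.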

He also highlighted structural challenges like the “lost in the middle” problem, where models pay more attention to information at the beginning and end of the context window than to the middle.
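A simple mitigation, sketched here as an assumption rather than something prescribed in the talk, is to reorder retrieved passages so the strongest ones sit at the edges of the context and the weakest land in the middle. The function name and the alternating scheme are illustrative.

```python
def reorder_for_edges(docs_by_relevance: list[str]) -> list[str]:
    """Place the most relevant documents at the start and end of the list.

    Input must be sorted from most to least relevant. Items are dealt
    alternately to the front and the back, so relevance falls toward
    the middle of the returned list.
    """
    front: list[str] = []
    back: list[str] = []
    for index, doc in enumerate(docs_by_relevance):
        if index % 2 == 0:
            front.append(doc)
        else:
            back.append(doc)
    # Reverse the back half so the second-best document ends up last.
    return front + back[::-1]
```

For five passages ranked `d1` through `d5`, this yields `d1, d3, d5, d4, d2`: the two best passages occupy the first and last positions, and the weakest sits in the center.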

For growth-stage companies, improving retrieval is often the highest-ROI investment they can make in AI performance.

Why This Becomes a Leadership Issue

As systems scale, Bhatia emphasized that this stops being just a technical problem and becomes a leadership one.

How disciplined is the team in how they build? Are they measuring performance or relying on intuition? Do they have a clear definition of what “good” looks like?

He cautioned against rushing from demo to production without proper evaluation. Instead, he recommended building “golden sets” of test cases that reflect real-world scenarios and using them to consistently measure performance.
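A golden set can start very small. The sketch below assumes a simple pass criterion, where an answer passes if it contains every required phrase. Real evaluations often need richer checks. `GoldenCase`, `evaluate`, and `fake_system` are hypothetical names, and `fake_system` merely stands in for whatever function calls your model.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class GoldenCase:
    prompt: str
    must_contain: list[str]  # phrases a good answer is expected to include


def evaluate(system: Callable[[str], str], cases: list[GoldenCase]) -> float:
    """Return the fraction of golden cases whose answer contains every phrase."""
    if not cases:
        return 0.0
    passed = 0
    for case in cases:
        answer = system(case.prompt).lower()
        if all(term.lower() in answer for term in case.must_contain):
            passed += 1
    return passed / len(cases)


def fake_system(prompt: str) -> str:
    # Stand-in for a real model call; always returns the same answer.
    return "Refunds are available within 30 days."


GOLDEN_SET = [
    GoldenCase("What is the refund window?", ["30 days"]),
    GoldenCase("Do you support SSO?", ["SSO"]),
]
pass_rate = evaluate(fake_system, GOLDEN_SET)
```

Running the same set after every prompt, retrieval, or model change turns "it feels better" into a number that can be tracked over time.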

This is what separates teams that experiment from teams that scale.

The Bottom Line

The reason AI feels inconsistent is not that it’s unpredictable.

It’s that most of the systems feeding it are.

Ravi Bhatia’s core message was clear. If you want AI to work consistently, you have to be intentional about context. What goes in, what stays out, and how information flows through the system all matter.

For growth-stage companies, this is one of the most important shifts to internalize. The teams that treat context as a first-class problem will build systems that are faster, more accurate, and more cost-effective.

Because in the end, AI is not just about what the model can do.

It’s about what you allow it to do.
